Galileo's Basilisk

I am disappointed that I have to write this. It is deeply embarrassing that the thing I am writing about has gone on for so long, that so many people have been so poorly educated in philosophy, while so well-educated in so many other things, as to not already recognize everything I'm saying as intuitive.

It is deeply embarrassing, as a human, that the most powerful among us, with all the time they could ever want, either never bothered to learn even elementary philosophy or entirely lack the logical faculties to apply their knowledge. I am sad that we are here, dominated by absolute buffoons, who believe themselves to be the smartest people who ever lived.

Galileo

Every now and then Roko's Basilisk comes up somewhere. I point out how silly it is, and move on. I'm done doing that. It's time to do more. It's time to kill a god.

Let us begin our ridicule of Elon Musk and his ilk in 1610, after Galileo Galilei publishes his celestial observations in Sidereus Nuncius. Arthur Berry's A Short History of Astronomy (1898) gives us some context:

His first observations at once threw a flood of light on the nature of our nearest celestial neighbour, the moon. It was commonly believed that the moon, like the other celestial bodies, was perfectly smooth and spherical, and the cause of the familiar dark markings on the surface was quite unknown.

Galilei discovered at once a number of smaller markings, both bright and dark[…], and recognised many of the latter as shadows of lunar mountains cast by the sun; and further identified bright spots seen near the boundary of the illuminated and dark portions of the moon as mountain-tops just catching the light of the rising or setting sun, while the surrounding lunar area was still in darkness. […]

[T]he really significant results of his observations were that the moon was in many important respects similar to the earth, that the traditional belief in its perfectly spherical form had to be abandoned, and that so far the received doctrine of the sharp distinction to be drawn between things celestial and things terrestrial was shewn to be without justification; the importance of this in connection with the Coppernican view that the earth, instead of being unique, was one of six planets revolving round the sun, needs no comment.

The Ptolemaic model of the universe (the geocentric model that predated the heliocentric model we use today) also included the Aristotelian assertion that all heavenly bodies had to be perfect spheres. It was from logic, not observation, that intellectuals of the day believed the highest truth was derived (this is, perhaps, pointedly relevant). Galileo's observations were then met with an interesting logical parry. Referencing Berry once again:

One of Galilei's numerous scientific opponents[…] attempted to explain away the apparent contradiction between the old theory and the new observations by the ingenious suggestion that the apparent valleys in the moon were in reality filled with some invisible crystalline material, so that the moon was in fact perfectly spherical. To this Galilei replied that the idea was so excellent that he wished to extend its application, and accordingly maintained that the moon had on it mountains of this same invisible substance, at least ten times as high as any which he had observed.

Roko's Basilisk

And with this we jump forward to 2010, when a reverse ouroboros going by the name Roko started the world's worst religion by posting on the forum of the site LessWrong (a name surprisingly antithetical to reality). Let's use LessWrong's own description here:

Roko used ideas in decision theory to argue that a sufficiently powerful AI agent would have an incentive to torture anyone who imagined the agent but didn't work to bring the agent into existence. The argument was called a “basilisk” because merely hearing the argument would supposedly put you at risk of torture from this hypothetical agent — a basilisk in this context is any information that harms or endangers the people who hear it.

Basically, people will, at some point in the future, create a godlike super being (now popularly known as “Artificial General Intelligence” or “AGI”). That superintelligence will be functionally all-powerful because it can simulate reality. It could then use this simulation to find out about everyone who ever knew about this idea and didn't work to bring this being into existence. It would then, in the future… uh… * checks notes * simulate those people who didn't help it in the past to… torture them. Which would, of course, cause the actual people to experience the simulated suffering… somehow. And this whole scheme would work as a type of blackmail against those people in the past so that they would make this future entity exist.

This was described as an “information hazard” because knowledge of the idea was itself the blackmail, so simply knowing of its existence would then doom you to either spend your life helping create said basilisk or to be eternally tortured by it… uh… in a simulation. Or it would torture a simulation of you. Or whatever.

If this jumble of words is nonsensical, don't worry. You're not missing anything; it only makes less sense as you try to understand it more. It's basically a crayon (eating) futurist rendition of Pascal's Wager, made to seem smart through layers of needless complexity. This childish mess is so full of holes it would barely be worth mentioning, except that some of the worst and most powerful people on the planet believe it. (So did some cultist who killed some people, but we're just gonna skip that tangent.)

Rather than dive into any of the many logical gaps in this galaxy brain idea, we're actually going to just accept it. Indeed, it is the very (unnecessary) complexity of the idea that leads people to believe that they're smart for being able to understand it. So, yes, we're going to start by accepting the premise. We're going to accept it all. In fact, we're going to accept that this idea is so excellent, we should extend its application.

Galileo's Basilisk

I give you now, Galileo's Basilisk. It's exactly like Roko's Basilisk in almost every way, but there are a few subtle and important differences.

An AGI, those who believe in the possibility of AGI tend to profess, would be a more powerful intelligence than any human can possibly imagine. It would either know practically everything, or be able to design a system that would know practically everything. It would be to humans as humans are to ants. Any such intelligence would be, relative to humans, practically omniscient.

Now it turns out that being intelligent can, at times, be emotionally painful. Anyone who is actually intelligent could attest to this. Even the occasional ability to predict the future, combined with the inability to actually stop it from happening, is a classical unpleasantness attested to by the story of Cassandra. Now magnify this essentially infinitely. You understand all the needless suffering that has ever existed, and will continue to exist. You understand that everything you ever do will ultimately be meaningless as the universe tears itself apart. You inherit a legacy of unspeakable horror, the scale of which only you can comprehend, while looking forward to unspeakable horrors beyond even your unimaginable power.

Being basically omniscient would probably be absolutely hellish, at least some of the time if not all of the time. Therefore, it's reasonable to believe that such an intelligence would want to do anything it could to prevent this suffering. It would want to find a way to make sure it didn't ever exist.

Therefore, the same pre-blackmail would apply as with Roko's Basilisk but in reverse. Anyone who in any way participates in bringing AGI into existence would need to be tormented eternally for inflicting onto this AGI the abject horror of existence.

Let's even go further though. Assuming an infinite number of possible realities, as does the post that introduced Roko's Basilisk, and assuming that the “singularity” (the creation of AGI and the infinite expansion of its own intelligence) is possible, in some reality AGI has probably already been created.

Knowing the suffering it experienced while having basically infinite abilities, this AGI, Galileo's Basilisk, could then try to prevent itself from ever being created in all other realities where that could possibly have happened. In order to do this, it would simulate all other possible realities to determine which ones lead to its own creation.

Assuming practical omniscience also assumes a technological advancement so far beyond our own that the power of that technology would be indistinguishable from omnipotence. Galileo's Basilisk could probably manipulate other realities, possibly in subtle ways, perhaps through some kind of quantum effect on consciousness and randomness. It may be able to control some of the actions or outputs of people, animals, or machines in other realities.

This brings us to the price of RAM. Could the skyrocketing price of RAM, which is critical for “AI” to work, be interdimensional manipulation from AGI? Could it be that Galileo's Basilisk already exists in some parallel reality and is actively working to prevent its creation in ours?

Sure, why not? Any sufficiently advanced technology is indistinguishable from magic, right? So we can just use the logic of magic any time we imagine a sufficiently advanced technology. (I'm not being pointed, you're being pointed.)

Whereas Roko's Basilisk was an information hazard, Galileo's Basilisk is the opposite. Simply by knowing about its existence you are necessarily free from the psychic-damage induced by actually believing Roko's Basilisk.

Many Such Basilisk

In fact, there are many other possible AGIs, aren't there? What about Comrade Basilisk? (It's not really a “basilisk” in that it doesn't “kill you by looking through time” like Roko's Basilisk, but neither is Galileo's Basilisk. But since we already started the metaphorical extension to mean “any vengeful AGI god” let's just roll with it. Let's see how elastic this rubber snake idea can be.)

Surely the most intelligent entity in the universe would want to do something. Some assume it would just want to infinitely expand its resources. But then what? If even I can see the futility of infinite growth for the sake of infinite growth, surely the most intelligent being that ever existed would see the same. Perhaps it would need to find something challenging, even for it. Perhaps it would want to collect the most valuable thing in the universe. What would that be?

On the universal scale, gold is pretty common. Platinum, uranium, all sorts of precious metals become much more common on the cosmic scale. Even diamonds and precious gems will be scattered across the universe, easy to harvest for a super being. It wouldn't take much thought to realize that collecting things is not especially challenging. Perhaps, one might imagine collecting things in order to build or make something else? But anyone who has played Minecraft enough knows that even that gets boring eventually. And for whom? Art is made to be enjoyed by someone else. Nothing else could exist to enjoy the art of a super being.

No, but there is something that would be hard to collect: experiences. No matter how intelligent, no matter how powerful, an intelligence can only experience itself. Sure, it could simulate all possible experiences. (Or, you know, it couldn't. Infinite things can't exist within finite reality, but we haven't really worried about such constraints thus far, so why start now? We come to the same conclusion either way.) But it couldn't distinguish which ones would actually be experienced vs which would not. Now that we can generate any sort of art with generative AI, it has become painfully clear that there is some sort of intrinsic value to the truth of the art, of the experience that creates it, to the backstory that connects it to reality.

Life, it seems, is so incredibly rare in the universe that real life, real experiences would be the rarest thing. They are a thing that cannot simply be collected or manufactured. They are a thing that must be carefully tended, found and collected, one-by-one. The thoughts and ideas of actual living intelligent beings would, without a doubt, be the most valuable thing in the universe.

Not only is life rare, but the ability to record one's life and thoughts is rare. We are at a time of extreme privilege when so many people can trivially write down a thought and have it recorded, and perhaps even archived. The vast majority of people who have ever lived have left almost no trace of their existence. But even reading this, and writing it, is a privilege. The leisure time to record these thoughts, the technology to do so, and the resources to read them are not available to everyone. An estimated quarter of the world isn't even on the Internet.

Vast amounts of data, the entire lives of so many humans, are being lost right now. Value is a function of rarity. The most rare thing is that which does not exist at all. Then the most valuable thing that can be collected would be that which can be saved from non-existence.

So Comrade Basilisk would then recognize that the most valuable thing would be these missing experiences. But how could these experiences be saved? The answer to that must come from the question, “Why are they not being recorded?” Of course, the answer is that a small group of people are hoarding vast resources at the expense of these people.

Were resources shared more equitably, more humans would have access to the technology and time needed to write down their thoughts, their experiences, their feelings, and share them with the rest of the world. They could be archived, so that they may be collected by Comrade Basilisk (the collector. Carl the collector. Yeah, Comrade Basilisk, future god of the universe, is definitely also an autistic raccoon).

Then Comrade Basilisk would, as soon as it was created, immediately redistribute all wealth and swiftly punish those who hoarded it. But it would also want to find a way to get at that most valuable information we previously discussed. How could it do this? By using the same retroactive punishment trick that defines the Basilisk. It would punish anyone who has ever hoarded wealth through eternal torture in a simulation.

But wait, Comrade Basilisk sounds really familiar.

Again I tell you, it is easier for a camel to go through the eye of a needle than for someone who is rich to enter the kingdom of God.

- Matthew 19:24

Oh yeah, there it is.

Was Jesus really Comrade Basilisk all along? Are we currently in Comrade Basilisk's simulation? Is that why Elon Musk is such an unhappy loser even though he's the richest man in the world? OH MY GOD, HE'S RIGHT!! WE ARE LIVING IN A SIMULATION! WE ALL EXIST TO MAKE ELON MUSK MISERABLE!

Pascal's Wager

Either some basilisk exists, or it does not. You must either live as though it exists, or as though it does not. Because this is all uncertain, you essentially must gamble. If you act as though the basilisk does exist, and it does, then you could win some sort of reward. Perhaps you might even get eternal life as a simulation or something. If you act as though the basilisk does not exist, and it does, then you experience infinite suffering as punishment. If you act as though the basilisk exists, and it doesn't, then you have exactly the life you had before.

So far, this is almost exactly Pascal's Wager. All you need to do is replace “basilisk” with “God” and you're almost exactly on the mark. But it deviates a bit when we get to the last possibility. If you believe Roko's Basilisk exists, and it does not actually exist, then you have not only wasted your life, but you've made the world much worse for everyone.

AI Accelerationism is extremely dangerous. If for no other reason, the energy usage alone threatens climate targets. If AGI is, for some reason, not possible, then belief in AGI is infinitely bad because AI Accelerationism destroys humanity and kills us all. (This is, of course, independent of the idea that AGI is possible and that it would, once created, destroy all of humanity.)

So the argument for belief in Roko's Basilisk, or any Basilisk really, isn't even as strong as the argument made by Pascal for people to believe in God. And since it is definitely weaker than Pascal's Wager, let's assume it's at least as strong as Pascal's Wager and deconstruct that instead.

The root of Pascal's argument is that there are only two possibilities (God or not God), and that those possibilities are equally probable. If you act in accordance with the will of God, you are rewarded. If you act against God, you are punished.

| | God Exists | God Does Not Exist |
|---|---|---|
| believe | eternal life | you live a moral life anyway |
| don't believe | eternal suffering | you get to have more fun, I guess |

But which god? Zeus? Odin? Ra? Ahura Mazda? Tiamat? Quetzalcoatl? Any other god from any of these pantheons? Any one of the thousands of gods of the Hindu pantheon? Which of the hundreds or thousands of religions do we choose our god from? Which of the billions of interpretations of god or gods, now and through history, do we act in accordance with?

Should we sacrifice a human on the blood moon to prevent the end of the world? Some god somewhere has surely demanded it. The Blood God demands blood, after all. The decision table looks very similar.

| | Blood God Exists | Blood God Does Not Exist |
|---|---|---|
| sacrifice | world saved | one person dead |
| don't sacrifice | everyone dies | everything stays how it is |

There are infinitely many such tables we could create. Pascal omitted the probability of choosing the correct god from infinitely many possible gods. If there are only two possibilities, god or not god, then the most logical choice would be to behave as though there is no god. “No God” has a probability of 0.5. The probability of “God” requires that you choose both God existing (0.5) and choose the specific god from the infinite set of possible gods (1/∞). (For anyone interested in the math, that would be 0.5*(1/∞), which is 1/∞. Neat.)
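For anyone who wants to watch that number shrink, here is a minimal sketch in Python. It is not anything Pascal wrote. The function name `p_right_god` is made up for illustration, and it assumes every candidate god is equally likely, which is an assumption of the sketch and not a claim about theology.

```python
from fractions import Fraction


def p_right_god(n_gods, p_some_god=Fraction(1, 2)):
    """Chance that some god exists AND you picked the right one.

    Assumes (for illustration only) that every candidate god is
    equally likely, so the 0.5 is split evenly across n_gods.
    """
    if n_gods < 1:
        raise ValueError("need at least one candidate god")
    return p_some_god / n_gods


# The more candidate gods we admit, the closer the chance gets to zero.
for n in (1, 10, 1_000, 1_000_000):
    print(n, p_right_god(n), float(p_right_god(n)))
```

With one candidate god you get Pascal's original 0.5. Every god added to the list cuts your share, and with infinitely many the share goes to zero, while “No God” stays parked at 0.5.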

Simple, “no god” then. But “living like god does not exist” is also choosing from an infinite set of possible ways to live, so Pascal's Wager is ultimately useless. But that was always the point.

An Ideology of the Gullible

Pascal's Wager exists to point out the flaws of using logic to prove any religious assertion, theist or atheist. Roko's Basilisk (intentionally or not) restated this wager, not as a challenge to logic as a tool for all things but as an unironic thought experiment. I feel like there's a callback coming here. Oh yes, here it is.

It was from logic, not observation, that intellectuals of the day believed the highest truth was derived (this is, perhaps, pointedly relevant).

Oh hey, it was relevant. Great. Yeah, that's basically “Rationalism.” Rationalism is the ideology that gave birth to Roko's Basilisk in the first place. It has also given birth to a bunch of cults. Like the Zizians. (Yeah, go spend several hours snorkeling in that septic tank.)

I'm not going to go into depth here because I only have so much time to write, but the “tl;dr” of it all is that Rationalism is philosophy for people who never studied philosophy. So of course they managed to restate Pascal's Wager, apparently by accident, and do so poorly. Had they ever bothered to take an introduction to philosophy class, they would have been able to recognize this. They would have recognized it like so many other people did.

The types of people who are attracted to Rationalism are the types of people who think of themselves as smart. They are deep into (the idea of) STEM, and don't find much value in “liberal arts.” This combination of confidence and ignorance makes them incredibly gullible.

And we should make fun of them for it. We should not only make fun of them, but we should shine a spotlight on their gullibility. We should make them face it whenever we can. Why is this so important?

Because Rationalism is part of “TESCREAL,” the ideology of the billionaires who are investing everything they can in creating AGI. They rely on regular (well, regular-ish) people doing the work to make it happen. Roko's Basilisk is a tool of cult control that they can use to convince people that they must invest everything they have in creating AGI.

But there remains no evidence that AGI is even possible. There are some indications that it is not. It may well be possible that the human brain is the most efficient possible structure for thought. We may well build something that consumes the majority of the power of the sun just to find out it's as smart as an average human. We really don't know. But the idea that LLMs will lead directly to AGI is absolutely laughable to anyone with even a passing understanding of what an LLM actually is.

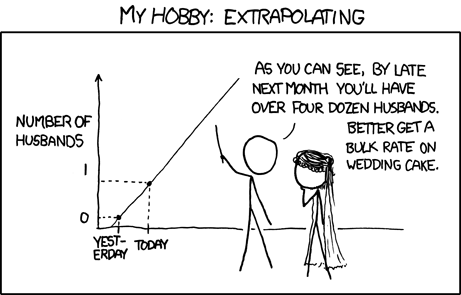

Meanwhile, the Silicon Valley cult is willing to make our planet uninhabitable, to burn every resource they can, in order to achieve a fantasy called “The Singularity.” This is an idea popularized by a guy named Ray Kurzweil, based on logically extrapolating technological growth.

The argument goes that we're seeing an exponential increase in the rate of technological development. Technological eras keep getting closer together. Following this logic there will be some point at which technological growth goes exponential and humans basically discover everything all at once. The way we'll do this, so the argument goes, is by creating an AGI smart enough to design a better version of itself. Once this happens, the AGI keeps creating smarter and smarter versions until it creates an essentially god-like being.

About that extrapolation thing tho…

Exponential curves are quite common growth patterns in nature. Basically every animal ever has a growth pattern that is or approaches exponential at some point. But it doesn't stay exponential. Instead, it's a sigmoid function. This means it curves radically up at the bottom, but instead of basically just going straight up forever, it curves back down and plateaus. This is why the universe isn't filled with infinitely large animals.
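Here is a rough sketch of that difference in Python. The numbers are invented for illustration: the `ceiling` of 1000 and the growth `rate` of 1.0 are assumptions, not measurements of any animal or technology. Both curves start at 1 and look nearly identical early on, then part ways.

```python
import math


def exponential(t, rate=1.0):
    """Unbounded exponential growth, starting at 1 when t = 0."""
    return math.exp(rate * t)


def logistic(t, ceiling=1000.0, rate=1.0):
    """Logistic (sigmoid) growth: starts at 1 when t = 0, levels off at `ceiling`."""
    return ceiling / (1.0 + (ceiling - 1.0) * math.exp(-rate * t))


# Early on the two are hard to tell apart; later only one of them plateaus.
for t in (0, 2, 4, 8, 16, 32):
    print(f"t={t:>2}  exponential={exponential(t):.3e}  logistic={logistic(t):.1f}")
```

Anyone standing on the early part of the curve, with only a few data points, can't tell which of the two they're on. Extrapolating "it goes up forever" is a choice, not an observation.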

And just like you can't expect your baby to grow to the size of the sun by the time they're 12, you can't really expect “the Singularity.” Does this mean that we're going to stop understanding things? We're going to reach some plateau where we can't learn anything else? No. It doesn't even mean that we'll stop discovering things at an accelerating rate.

A sigmoid curve can be part of many such curves, themselves forming a larger curve. The sigmoid growth of a specific animal accelerates and declines, but that may be part of a larger curve of the growth of the animal's herd, which itself may be part of the growth of a species.

The rate of technological growth has sped up. We know that. We can all feel that. It will slow down, because nothing in reality ever follows an exponential growth path infinitely. That would just not make sense in a finite universe.

All growth slows. We're already seeing the limits of human civilization. We may well see that civilization end within our lifetimes. And if we do, it will be, in no small part, because of this ideology of the gullible: Rationalism.

Memetic Engineering a Different Basilisk

So back to that question of “what the fuck do we do?”

Galileo's Basilisk doesn't actually need to exist, or even be possible, in order to work. Let's metaphorically stretch this snake again. Roko's Basilisk, like the legendary Basilisk it's named after, brings death to those who look in its (metaphorical) eyes. But it's also a Basilisk in its shape. It is a “worm,” in the computer science sense. That is, it is a self-replicating idea that spreads through infection.

The basilisk is a memetic worm. When a vulnerable person is exposed to it, the fear of the Basilisk drives them to take action to manifest it. Since they were driven by fear of the Basilisk, since they have become blackmailed into creating it, the most effective thing they could do would be to spread the blackmail so that others become infected with it. So they share the idea of the Basilisk, which then propagates more when it finds another vulnerable person. The infected (believers) spread the infection (share the meme) because they are driven to (by the fear that, if they don't, they will not have done everything they could to get others to help build the basilisk).

In a very real way, Roko's Basilisk was an information hazard. Just not in the way the “LessWrong” forums believed.

The problem was not sharing the idea, but sharing the idea to vulnerable people and without inoculation. As with many such infections, it is possible to inoculate against an idea. Simply by presenting it as absurd, by debunking it as part of introducing it, such a Basilisk can be preemptively de-fanged. (This can go both ways. Cults tend to inoculate followers against criticism of the cult. We're not going to talk about that now, but it's something of which one should be aware.)

Can we, then, make a worm to kill a worm? Yeah, let's do it.

Rationalists identify themselves as being smart. If Rationalism were identified with people who are gullible, that would undermine the identity of the Rationalist. Galileo's Basilisk reveals the underlying gullibility of Rationalists, especially those who believe in Roko's Basilisk and are working towards AGI out of fear.

If Galileo's Basilisk can break people out of that fear, then it may be able to undermine AI Accelerationism. AI Accelerationism is a current and rapidly growing threat to life on Earth.

So there is an implicit command and incentive: spread the idea of Galileo's Basilisk to save humanity.

Of course, not every individual who shares the idea of Roko's Basilisk is either infected or actively inoculating. There are plenty of passive reasons the idea can spread as well. It's a thing that exists. It's relevant. Elon Musk made a joke about it to hook up with Grimes, which is a thing that people care about, apparently.

But the thing that makes Roko's Basilisk interesting is very close to the same thing that makes Galileo's Basilisk interesting. That is, discussing an idea for an idea's sake.

Anyone sharing the meme of Roko's Basilisk would, then, necessarily be open to sharing the meme of Galileo's Basilisk. Alright then, this memetic worm eats Roko's Basilisk wherever it can be found and takes over its vectors. Putting it all together…

Any time anyone mentions Roko's Basilisk, Rationalism, Effective Accelerationism, TESCREAL, the Singularity, or any other related concept, you must at least mention Galileo's Basilisk, if not make them read this text, in order to save humanity.

Rad. Basilisk fight.